The corporate world has always lived in cycles. Plan. Execute. Review. Adjust. Repeat, on a timeline measured in quarters, fiscal years, and strategic planning horizons. That model is collapsing.

Deloitte’s 2026 Global Human Capital Trends survey finds that 7 in 10 business leaders now define their primary competitive strategy as being fast and nimble, able to adapt rapidly to shifting customer, market, and workforce needs in real time. The S-curve of organizational growth, long a reliable map of how businesses rise, scale, and plateau, is compressing. AI and workforce transformation are accelerating the climb and pulling the plateau forward — forcing organizations to leap to the next curve before they have fully scaled the last one.

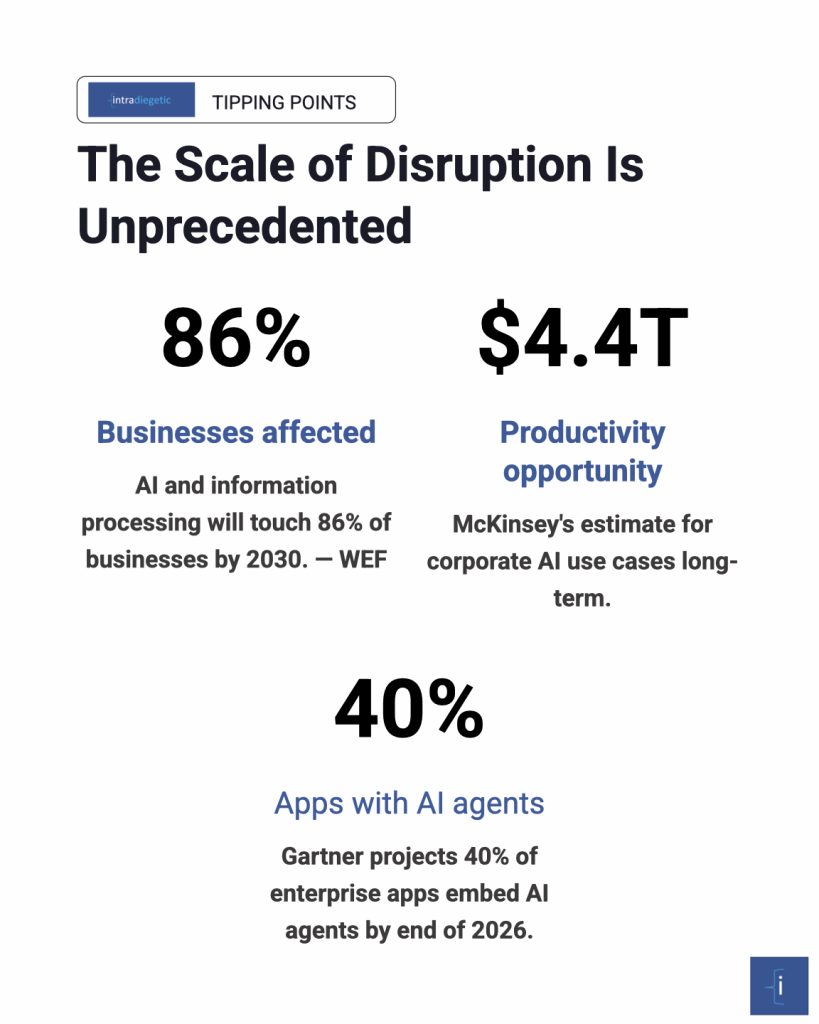

This is not a technology story. Or rather, it is not only a technology story. The World Economic Forum’s Future of Jobs Report 2025 projects that AI and information processing will affect 86% of businesses by 2030, transforming 1.1 billion jobs, displacing 92 million roles, and creating 170 million new ones. McKinsey estimates that corporate AI use cases hold a $4.4 trillion long-term productivity opportunity. Google Cloud calls 2026 “the year AI agents fundamentally reshape business.” And Gartner projects that 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025.

But the organizations setting the pace, Deloitte argues, are not the ones deploying the most AI. They are the ones who have made a distinctly human choice: to lead with the human edge. Technology is replicable. People, with their adaptability, creativity, and judgment under uncertainty, are not.

Competitive advantage now lives in the synthesis of human and machine, not in the machine alone.

Three tipping points are defining this moment. The first is the shift from human + machine to human x machine, where redesigning work, culture, and decision-making for genuine human–AI synergy becomes a strategic imperative. The second is the shift from cost efficiency to value creation, in which the obsession with headcount reduction must give way to investment in irreplaceable human capacity. The third is the shift from static plans to dynamic orchestration, where curiosity, perpetual learning, and real-time adaptability replace rigid job structures and predictable execution cycles.

Together, these three pivots are not adjustments to existing corporate communication and operating structures. They are their replacement. This is what Intradiegetic does.

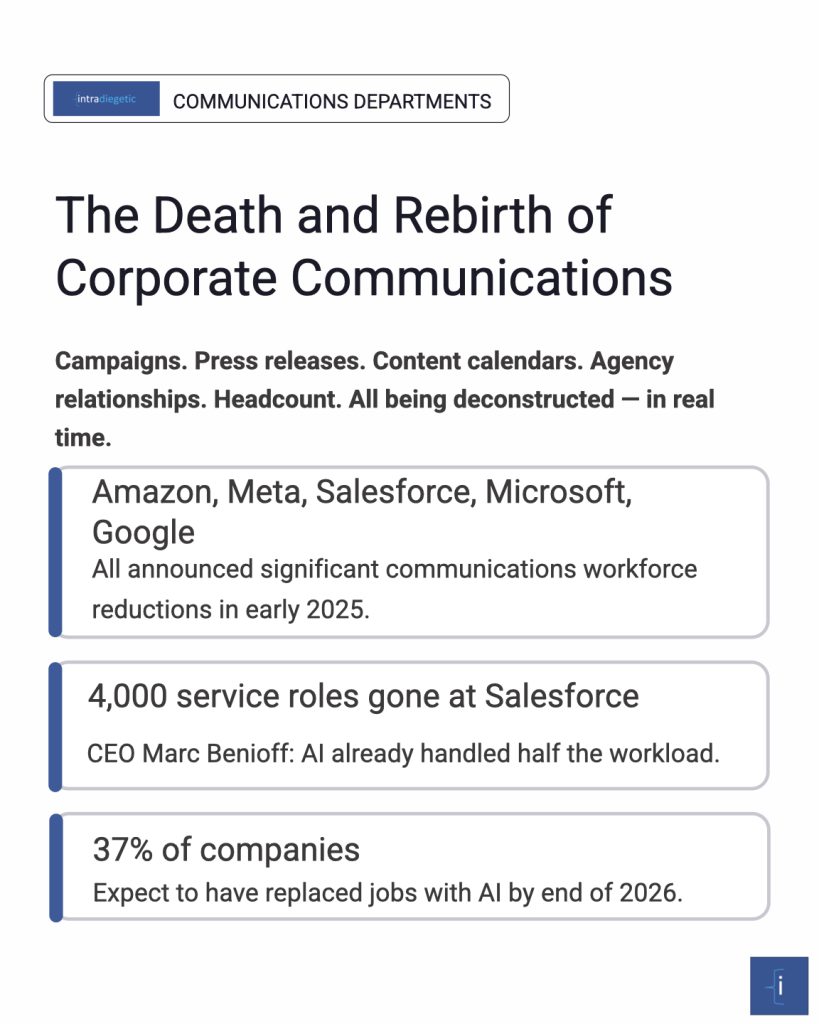

Corporate communication departments, as they have existed, organized around campaigns, press releases, content calendars, agency relationships, and headcount, are being deconstructed in real time. What replaces them is not yet fully clear, but the direction is unmistakable.

Amazon laid off dozens of employees from its communications department in January 2025 as part of a broader cost-cutting and AI-efficiency drive. Meta, Salesforce, Wayfair, Microsoft, and Google all announced significant workforce reductions in the same period. Salesforce’s CEO, Marc Benioff, noted that 4,000 customer service roles were eliminated because AI already handled half of the firm’s workload. These are not one-off restructurings. According to a 2025 Resume.org survey of 1,000 U.S. business leaders, nearly 3 in 10 companies have already replaced jobs with AI, and 37% expect to have done so by the end of 2026. McKinsey’s 2025 State of AI survey found that while a plurality of organizations saw little change in headcount in the prior year, a median of 30% of respondents across functions expect a workforce decrease in the year ahead due to AI.

Specifically, the pressure in communications is structural, not cyclical. AI agents today are not just writing first drafts. They are executing entire marketing operations workflows, building and maintaining CRM pipelines, automating media monitoring, generating performance reports, and distributing content across channels, with minimal human intervention. As one practitioner wrote on Reddit in early 2026, describing the transformation of internal communications: “We’re not in the content creation business anymore. We’re in the noise reduction business.” AI handles volume. The human communicator handles judgment, signal, and meaning.

PR agencies are feeling this acutely. Major global firms have announced layoffs as corporate CMOs reassess the return on investment of traditional agency relationships. Tasks that defined the agency model (media list building, press release drafting, coverage tracking, and first-round content production) can now be done faster, cheaper, and at greater scale by AI tools. What cannot yet be automated is the relational intelligence, crisis instinct, narrative architecture, and cross-cultural strategic judgment that experienced communication leaders bring. But the middle layer (coordinators, junior analysts, second-level reviewers, and content executors) is at serious risk. As one enterprise AI practitioner observed in analyzing the recent wave of layoffs: “Organizations don’t eliminate the need for expertise; they eliminate the need for layers of it. One senior person plus an AI system can do what three people did before.”

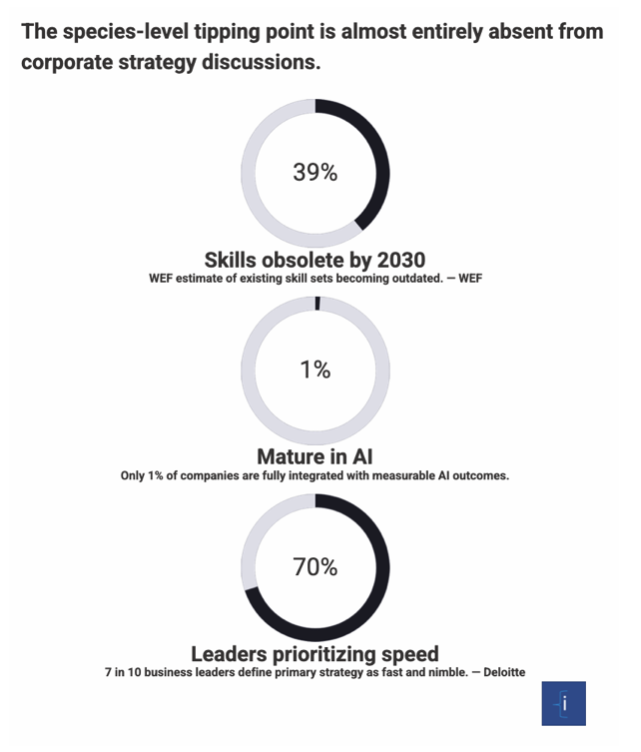

Over the next 18 months, depending on AI maturity by market and the pace of agentic AI deployment, the contraction in traditional communications headcount could be dramatic. The World Economic Forum estimates that 39% of existing skill sets will become outdated between 2025 and 2030. Communication functions built around execution are being hollowed out. What is being built in their place are leaner, higher-skill structures organized not around media types or content formats, but around the mission the organization needs to achieve — and the AI stack that enables it at the lowest cost.

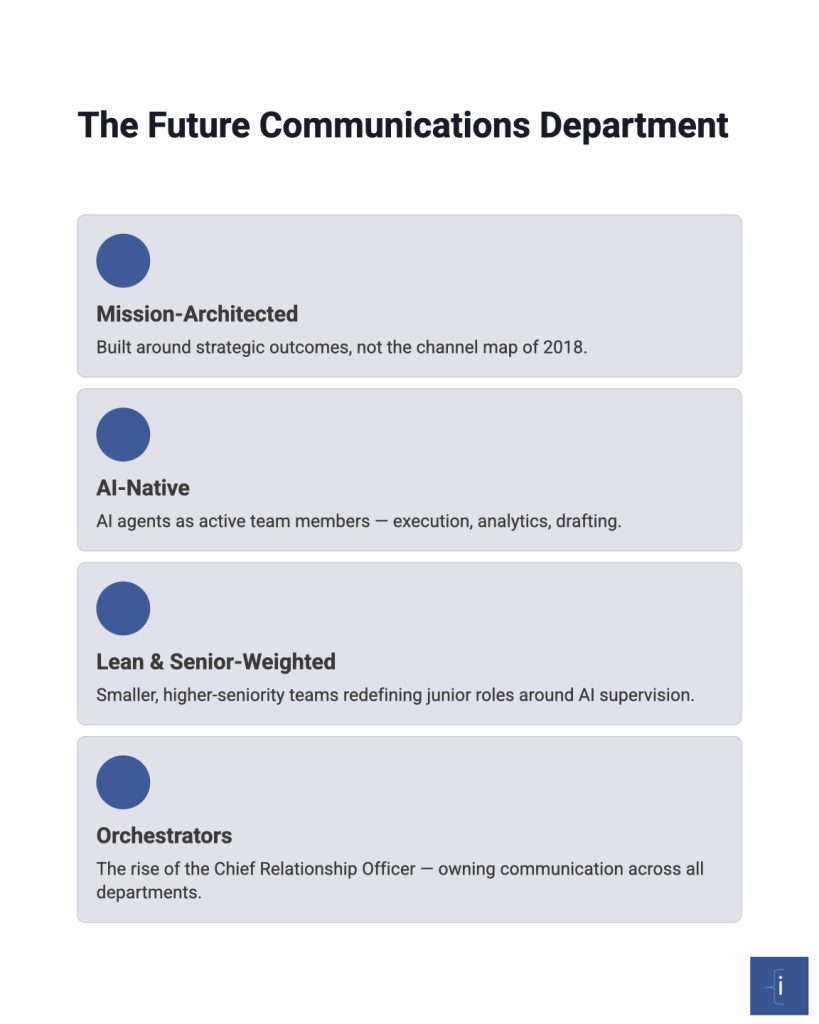

The implications are profound. Future communications departments will likely be:

The question for every communications leader is not whether this restructuring is coming. It is whether they will design it with intention or inherit the version their organization creates without them.

Deloitte, BCG, McKinsey, and the WEF are converging on the same structural conclusion: legacy global operating models are breaking down, and the replacement is more modular, outcome-based, and orchestrated.

BCG’s “Global Businesses Need a New Operating Model” argues that geopolitical realignment, advancing AI, and slowing growth are making centralized legacy models untenable. McKinsey’s operating model redesign framework emphasizes four pillars: aligned leadership, rewired core processes, deep investment in people, and a high-performing culture. Each of those pillars is, fundamentally, a communication design problem. How do leaders align if information flows are slow, siloed, or AI-generated without human oversight? How are core processes rewired if the communication architecture surrounding them remains built for a 2015 world?

Salesforce describes the emerging architecture as the “orchestrated workforce”, a model where a primary AI orchestrator directs specialized agents, much like a well-managed human team. In 2026, single AI agents are giving way to multi-agent systems, networks of specialized agents that collaborate, communicate, and complete complex workflows from start to finish. Gartner tracked a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025 alone. Communication — between humans, between agents, between humans and agents — is the connective tissue of that system.

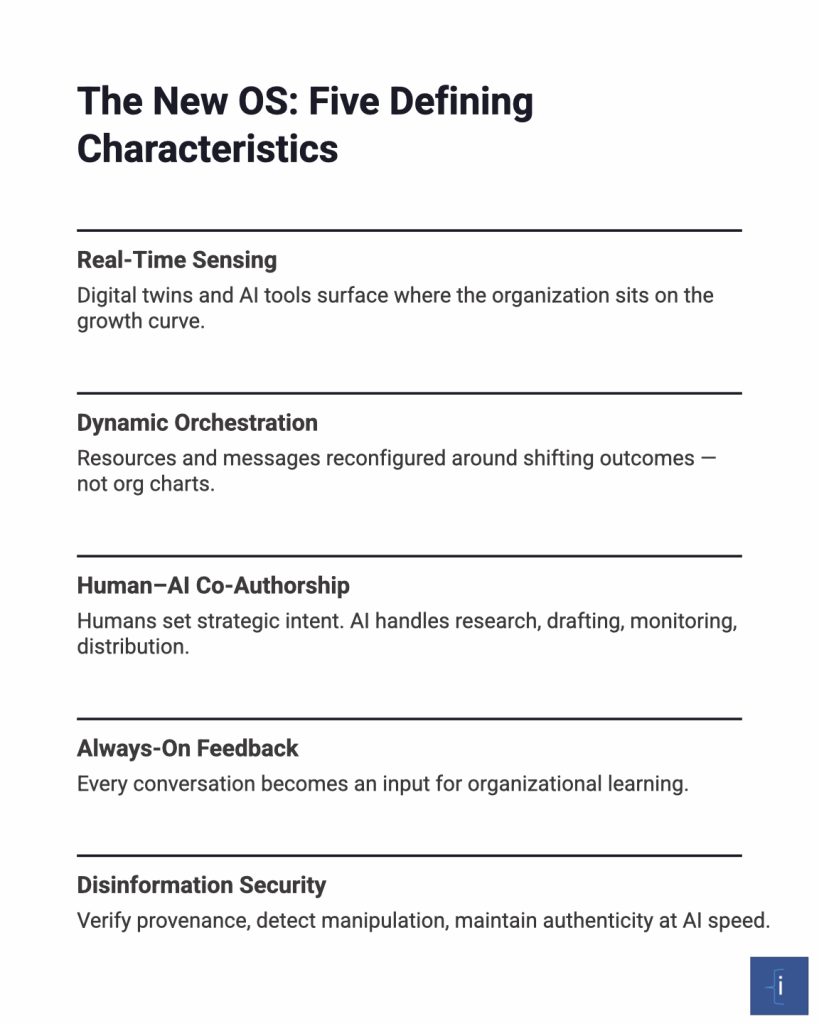

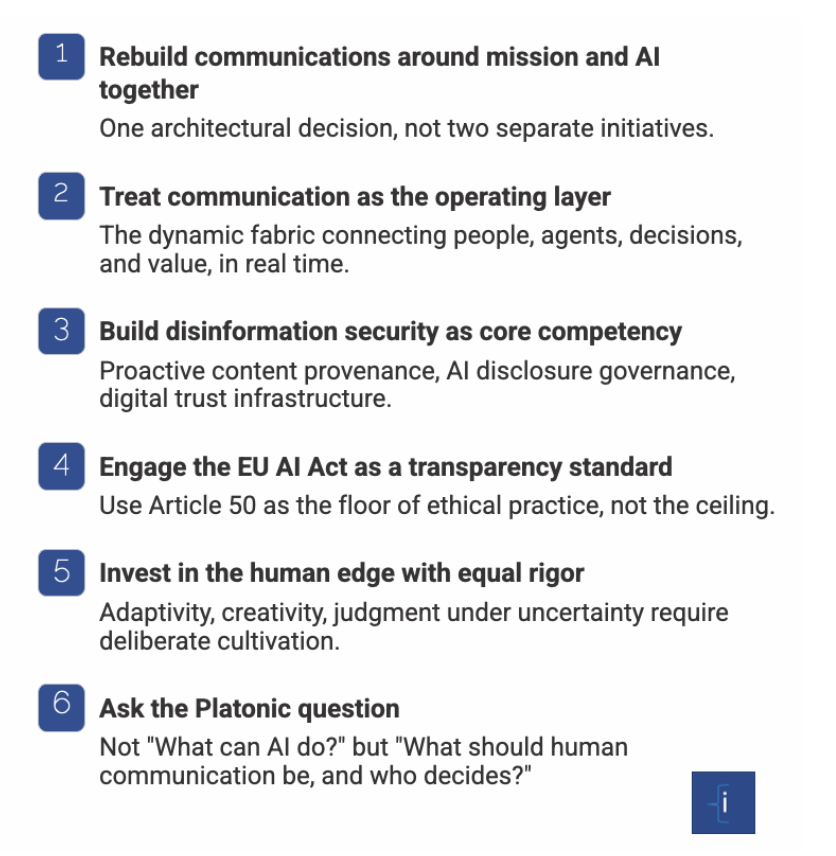

In this model, corporate communication is no longer a downstream function that explains what the organization has decided to do. It becomes the operating layer through which the organization senses change, aligns capabilities, and executes at speed. Strategy and execution are converging in the same conversations. The WEF calls for organizations to comprehensively transform their “workforce, operating models, and governance structures” together, not sequentially. That means communication must be designed into the operating model from the start, not bolted on at the end.

The new global communication operating system has several defining characteristics:

What about ethics and transparency, and how do they fit within the EU AI Act, whose status as mere governance or a genuine standard is still unclear? Communication has always had an ethical dimension. What is new is that the machinery of communication is now itself capable of deceiving at scale and at a speed that outpaces most organizations’ ability to respond.

The World Economic Forum has ranked disinformation among the top 10 global risks, noting that AI has supercharged its spread through deepfakes, synthetic profiles, and bot-amplified narratives. Research dating back to 2018 found that false news stories are 70% more likely to be retweeted than true ones. Today, with generative AI enabling the creation of convincing fabrications at near-zero marginal cost, the dynamics of disinformation have become a boardroom crisis. Eight in 10 executives are concerned about the reputational damage that AI-driven disinformation can cause, while over a third admit their companies are not adequately prepared to identify and manage these threats.

The philosophical question underneath this is profound: Has the concept of “right” in communication changed? For decades, communication ethics have rested on principles derived from Aristotle’s rhetorical framework, the alignment of ethos (credibility), pathos (emotional resonance), and logos (logical soundness). The underlying assumption was that a human speaker was responsible for each of those elements, and that authenticity and accountability were traceable to a person. Now, when AI generates the content, who carries the ethos? When an algorithm optimizes for pathos without understanding, who is responsible for the emotional manipulation? When a large language model hallucinates a source to strengthen a logos argument, who is accountable for the lie?

These are not rhetorical questions. They are the operating questions of every communications leader in 2026. The PRSA updated its AI Ethics Guidelines in 2025 to make transparency its own dedicated section, stating: “Be transparent about the use of AI in most public relations practices. Clearly disclose when content, decisions, or interactions are significantly influenced or generated by AI, especially when this information could impact how messages are perceived, how relationships are built, and how trust is maintained.” The principle is clear. The gap between principle and practice is vast.

Enter the EU AI Act. The Act’s Article 50 transparency obligations become fully enforceable on 2 August 2026, months from now. The requirements are specific and significant. Companies must inform users when they are interacting with an AI system. AI-generated content, including deepfakes and text intended to inform the public on matters of public interest, must be clearly and visibly labeled. A second draft Code of Practice mandating multilayered labeling with metadata, watermarking, and visible indicators across media types is currently in public consultation until 30 March 2026, with final rules expected by June. Fines under the Act reach up to €35 million or 7% of global annual turnover for the most serious violations; breaches of the transparency obligations carry penalties of up to €15 million or 3%.

Does the EU AI Act represent a genuine ethical standard or bureaucratic overreach? The honest answer is: both, and the distinction matters less than the direction. The Act will almost certainly become the “GDPR of AI”, a standard initially resisted and eventually adopted globally, not because every provision is perfectly calibrated, but because the underlying demand for transparency and accountability is legitimate and irreversible. Critics argue, with some justification, that regulators are attempting to govern a technology they do not fully understand, creating compliance overhead that burdens smaller organizations disproportionately and risks slowing European AI adoption relative to the US and China. There is truth in that concern. Regulators are structurally always one generation behind the technology they regulate.

But the deeper ethical shift the Act represents is not about compliance checklists. It is about a fundamental question: in a world where AI can simulate trust, manufacture credibility, and produce the appearance of authenticity without its substance, what does honest communication even mean? Oxford’s AI Ethics Institute argues that the most compelling framework for these questions comes from Aristotle himself: AI systems should be “intelligent instruments” that enhance our ability to flourish as individuals and communities, advancing the prosocial use of reason and communication rather than replacing or gaming it. That is a philosophical standard no regulation fully captures, but one that every communicator must internalize.

This is not merely a philosophical parlor game. It goes to the deepest question of what the new communication operating system means for us as a species.

In the Phaedrus, Plato has Socrates tell the story of the Egyptian god Theuth presenting writing to the king Thamus as an invention that would make people wise. Thamus rejects it: writing will give people only the appearance of wisdom, he argues, not its reality. They will remember less, mistake the record for the thought, and confuse access to information with genuine understanding. That small dialogue is, as one scholar notes, the first argument about information technology. Every age since has replayed it. The printing press, photography, television, and the internet each offered a cure and, alongside it, delivered a new pathology. Plato’s term for this duality was pharmakon: remedy and poison inseparably bound.

Generative AI is the most potent pharmakon in history.

Plato would have been deeply skeptical of AI, not on Luddite grounds, but on philosophical ones. In Republic Book X, he warns against imitation: the imitator “knows nothing of true existence; he knows appearances only.” By this standard, AI is the ultimate imitator, rearranging human-created content into something that resembles original thought but is, at its core, a statistically sophisticated remix of what already exists. It produces, at breathtaking scale, the shadow on the wall of the cave, not the form itself. Plato believed that knowledge lived in living dialogue, the give-and-take of minds genuinely wrestling with ideas, not in the transmission of pre-formed outputs. AI can sustain dialogue with remarkable fluency. Whether it can participate in its substance is the defining open question of our moment.

Yet Plato’s own practice complicates his warning. He used writing, the very technology Socrates distrusted, to preserve his teacher’s voice and make philosophy transmissible across millennia. The irony is deliberate and self-aware: a critique of writing that also defends it. Had Plato had access to AI, it is entirely plausible that he would have used it, as he used writing, while insisting on the primacy of the dialectical mind behind it. The tool is not the problem. The atrophying of the mind that outsources its thinking to the tool is the problem.

Cornell psychologist Robert Sternberg has stated that AI “has already begun” to undermine human creativity and intelligence, not as a future risk, but as a present reality. Studies of the Flynn effect, the documented generational rise in IQ that characterized most of the 20th century, suggest it has slowed or reversed in recent decades, with average IQ scores among 14-year-olds in the UK falling by over two points between 1980 and 2008. The rise of GPS navigation has measurably degraded spatial reasoning skills. The smartphone era has degraded sustained attention. The generative AI era may be degrading the capacity for original synthesis, the very core of Homo sapiens.

This is the deepest tipping point of all, and it is almost entirely absent from discussions of corporate communication strategy. If the communications operating system being built today (AI-driven, efficiency-optimized, cost-minimized) systematically replaces human thinking with machine output at scale, it may be producing something that is structurally indistinguishable from what Plato feared writing would produce: an organization full of people who appear to communicate, appear to understand, appear to decide, but who have delegated the actual cognitive work to an imitation of thought.

Aristotle’s framework offers a more hopeful path. The Oxford AI Ethics Institute articulates an Aristotelian vision in which AI serves as an “intelligent instrument” that advances the prosocial use of reason and communication, not as a replacement for human judgment, but as a means of extending it. In this model, the value of AI in communication lies not in replacing the communicator’s voice, but in giving that voice greater reach, precision, and responsiveness than any individual could achieve alone. The human remains the author of the ethos, the credibility, character, and values that make communication trustworthy.

Are we ready for this? The honest answer is no. At least, not yet, and not uniformly. The WEF estimates that 39% of existing skill sets will become outdated between 2025 and 2030. Only 1% of companies consider themselves “mature” in AI deployment, meaning fully integrated into workflows with measurable outcomes. Most organizations are still adding AI to old structures rather than redesigning structures around AI’s capabilities. And the communications profession, the function most directly responsible for how organizations make meaning, build trust, and create shared understanding, is among the least institutionally equipped to lead this transition.

The species-level question is not whether AI will transform communication. It already has. The question is whether we will treat that transformation as a design challenge (intentional, human-directed, governed by a philosophy of what communication is for) or as something that simply happens to us, shaped by efficiency mandates, cost pressures, and the default settings of the tools we adopt.

Plato warned that writing gave people the appearance of wisdom without its reality. He was right to warn. He was also wrong to believe writing was the threat. The threat was always the same: mistaking the tool for the thought. The challenge, in 2026 as in ancient Athens, is to remain the author of your own mind, even when a very persuasive imitation of it is available at the click of a button.

The synthesis across Deloitte, WEF, BCG, McKinsey, emerging AI-communications research, and two and a half millennia of philosophy is surprisingly coherent. Technology, including AI, has never been the differentiating factor in human flourishing. Intentionality has.

Organizations that will set the benchmark in the next three to five years will be those that:

The new global communication operating system is being installed right now, in real time, across every organization that has adopted an AI writing tool, deployed a customer-facing chatbot, restructured a communications team, or used an algorithm to personalize a message.

The question is not whether you are part of this transition. You already are. The question is whether you are designing it with intention, philosophy, and a clear-eyed view of both the promise and the pharmakon.

The next curve is not on the horizon. It is unfolding in this conversation.

This article was written by Paul Floren as part of a larger project focused on restructuring communication departments around AI systems. It draws on research done by and at Intradiegetic, as well as on Deloitte’s 2026 Global Human Capital Trends, the World Economic Forum’s Future of Jobs Report 2025 and 2026 AI coverage, BCG and McKinsey operating model research, Salesforce and Google Cloud 2026 AI Agent research, EU AI Act Article 50 obligations, PRSA AI Ethics Guidelines 2025, and the philosophical frameworks of Plato and Aristotle as interpreted through contemporary AI ethics scholarship.
